Testers getting shots up on a beta machine and beta software stationed at their school for a number of months of testing.

Building a Beta program that de-risks launches

Problem: We were shipping into the real world without testing in the real world

What I focused on: Creating a lightweight, high-impact way to validate software and hardware before launch

What changed: Built a field-tested beta program that cut post-release bug reports almost in half and stopped a bad launch

My role: Beta strategy, program design, operations, cross-functional execution

PROBLEM

We weren’t testing our software and hardware in real environments. Internally, we checked specs and ran regression tests, but all of it happened in a bubble. After several hot-fix-worthy releases and a failed alpha test of a new product, it became clear that internal testing wasn’t enough.

This put our reputation for reliability at risk and wasted resources on features that wouldn’t work in the real world. It created rework and a constant cycle of fires after releases.

If we didn’t fix this, we would keep burning time and credibility shipping problems we could have caught earlier. Worse, we were missing a huge opportunity to learn from real users before it was too late.

DEFINE

We needed to decide what kind of beta program we were building. How strict should it be? Do we require NDAs? What’s the ask of testers? What do we do if feedback is negative? And are we truly willing to pause a launch if the product isn’t ready?

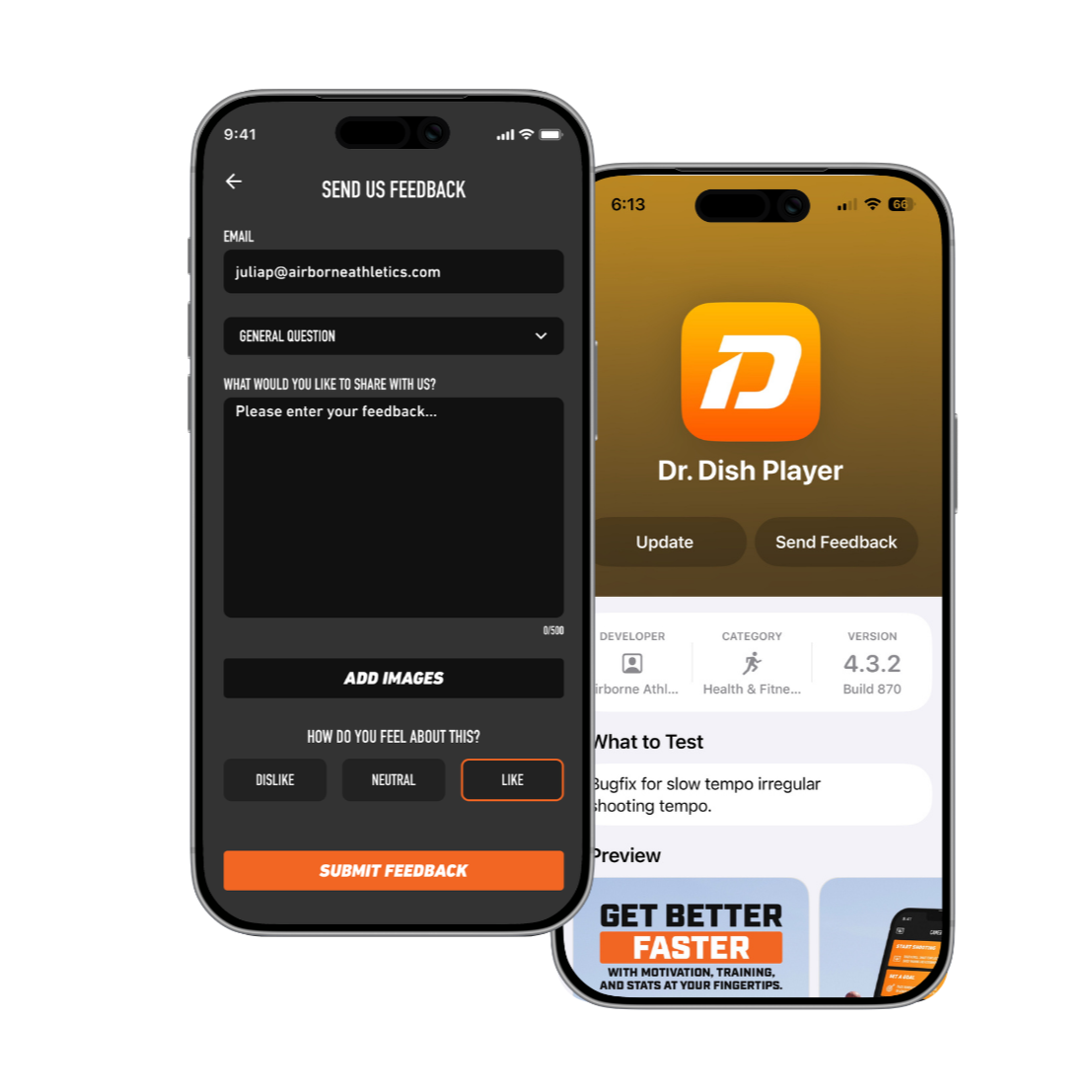

Because we were starting from almost nothing, I pushed for a lightweight approach. I recruited beta testers from our existing customers, gave them access to builds, and created a simple in-app feedback form using our existing support infrastructure. The goal was to start small, learn fast, and lower the barrier to participation.

We intentionally did not add heavy process or legal friction. No NDAs. No complex onboarding. The best outcome of beta is usage, so we focused on creating space, time, and easy paths for people to try new things and tell us what broke.

Example of the in-app feedback form and the beta listing in TestFlight.

SOLUTION

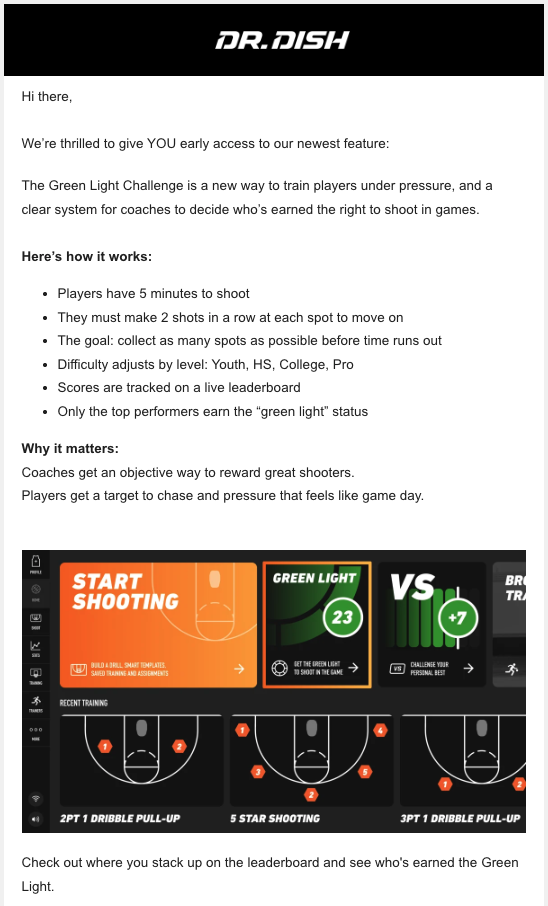

I eventually built a beta program with 500 software testers, a repeatable beta release process, clear communications, and streamlined feedback collection. We also created structured beta workflows for each new hardware launch.

I personally recruited all software testers. For hardware, I hand-delivered machines to build trust and provide context, checked in frequently, and gathered feedback directly from the field. I also created a reporting cadence to synthesize findings and share them with product, engineering, and leadership so we could catch issues early and often.

The main tradeoff was time. We spent more time testing before launch, but that time paid for itself many times over by catching critical issues early. The program also effectively expanded our testing surface from one internal tester to hundreds of real-world users, without slowing the team down.

Tester getting reps in on a beta machine that was left at this facility for a couple of months. This particular machine logged ~20,000 shots during testing.

RESULTS

Software bugs reported to service immediately after releases dropped by almost half

We halted the launch of a new product that didn’t meet real-world standards

We significantly reduced release risk and post-launch fire drills

For the business, this increased confidence and protected our credibility. For users, it meant more stable products and fewer support issues. Internally, it freed up time to focus on real product development instead of emergency fixes and raised the bar for what “ready to release” actually meant.

Example of the beta sticker I designed to place on machines and collect in-the-moment feedback from players via QR code.

Email sent to beta testers encouraging engagement.

MY ROLE

I owned the beta program end-to-end, including:

Recruiting testers

Managing access and permissions

Delivering physical machines

Creating beta materials and guidance

Collecting feedback

Distributing insights to product, service, engineering, and leadership

Managing ongoing customer communication

For software, I decided when we needed more testers and how we communicated releases. For hardware, I set the beta timelines, defined the ask of testers, coordinated on-site visits, and led feedback collection.

I worked closely with software and mechanical engineers to make sure testing answered the questions they actually needed to feel confident shipping, and to turn feedback into actionable decisions.

Personally dropping off beta machines at testing locations.

WHY I’M SHOWING THIS WORK

This project shows how I build clarity and momentum when there isn’t an existing playbook. I defined the right level of rigor, adjusted when things didn’t work, and turned a vague goal (“we should do beta testing”) into a system the company relies on.

It highlights that I:

Thrive in ambiguity and build structure where none exists

Partner closely with engineering to turn feedback into better decisions

Design processes that balance speed with quality

Advocate for both the business and the customer

Turn real-world insight into safer, stronger launches

Details have been intentionally generalized or modified to respect confidentiality and intellectual property agreements. This case study focuses on my role, process, and decision-making rather than proprietary implementation details.