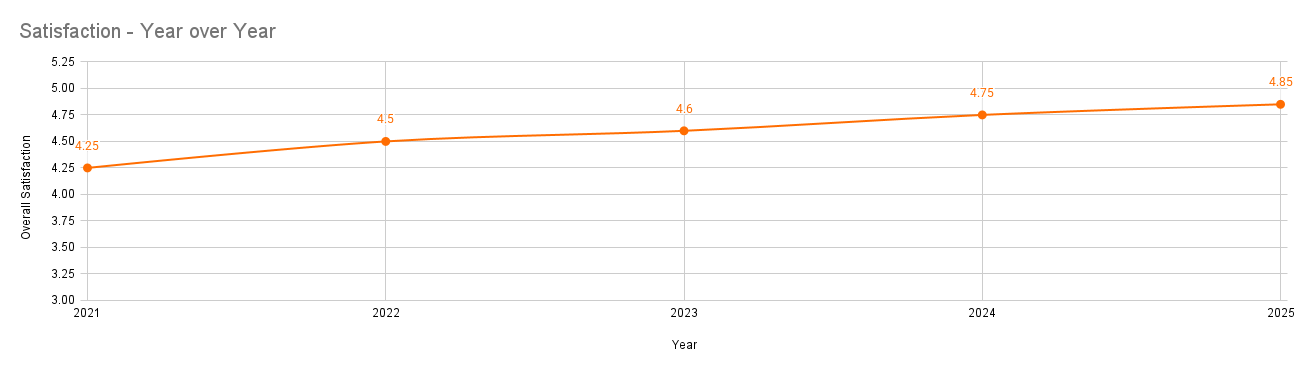

Example image only; not representative of actual satisfaction scores.

Building a customer satisfaction collection system that guides product roadmaps

Problem: We didn’t really know how satisfied our customers were or why

What I focused on: Creating a simple, repeatable way to measure and understand satisfaction over time

What changed: Turned “gut feel” into a longitudinal insights system used across the company

My role: Research strategy, survey design, insights synthesis, executive communication

PROBLEM

We didn’t know whether our customers were satisfied with their purchase. Outside of reviews and anecdotal feedback, we had nothing concrete to go on. That meant we were making big product and business decisions without a clear understanding of what customers loved, tolerated, or disliked.

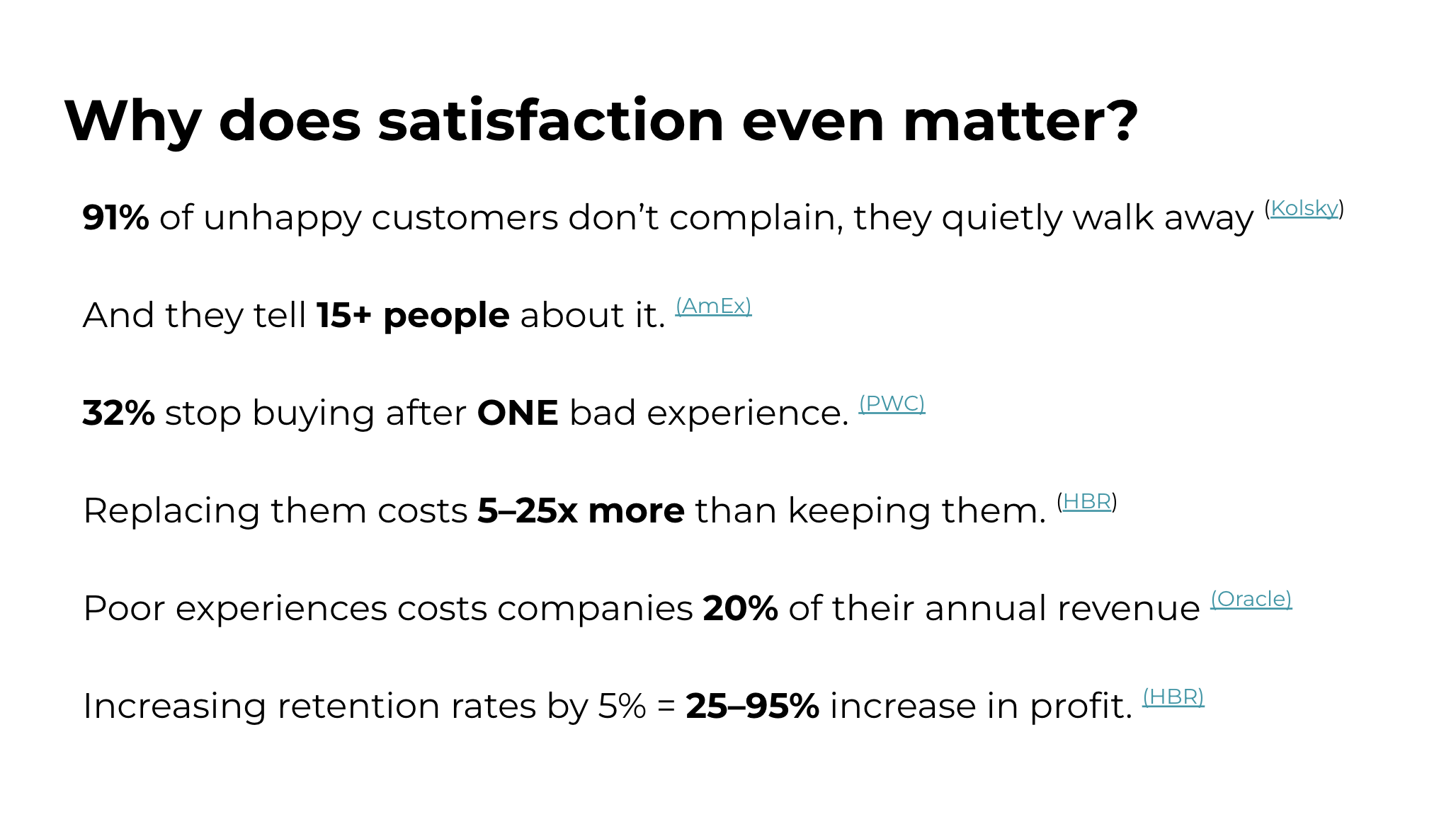

Satisfaction isn’t a “nice to have.” When customers see value in what they buy, they become advocates. They renew, they recommend, and they help your product gain traction in ways a company couldn’t achieve on its own. Without a consistent way to measure satisfaction, we were risking time, energy, and roadmap bets on things that might not move the needle.

Slide I always include when sharing CSAT results, making it clear why customer satisfaction is so important.

DISCOVER

Before choosing a solution, I needed to understand what kind of signals would help us make business decisions. I wanted to understand satisfaction across ownership length, usage patterns, feature adoption, and perceived value.

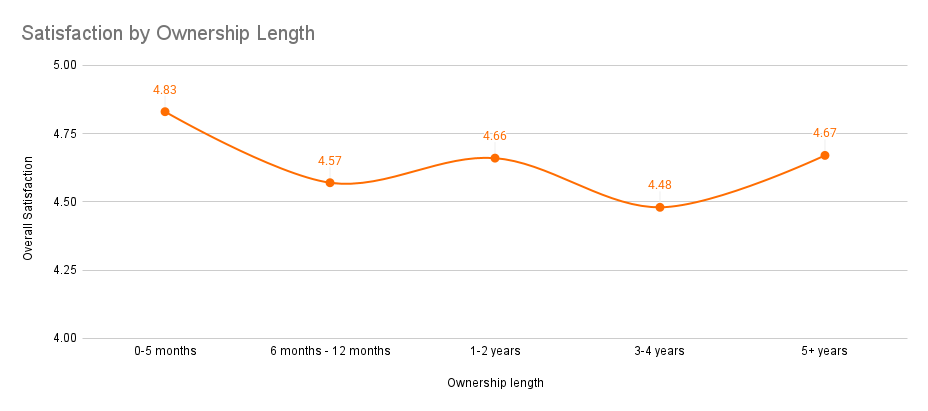

Satisfaction changes over time. People are often happiest right after purchase, so we needed to hear from customers at different points in their ownership journey to get the whole picture.

*Example data used.* However, it is typical for user satisfaction to drop over the course of ownership as the novelty of a new purchase wears off.

What surprised me most was how much insight you can get from a small, focused survey. With just 5–10 well-chosen questions, we could uncover patterns that were both deep and actionable. I also learned that this kind of data doesn’t go stale as quickly as I expected. Over time, these surveys became invaluable snapshots of how customer sentiment evolved year over year.

DEFINE

We needed to decide who to survey, what to ask, and how often to do it. We also had to decide whether this would be a one-time effort or something more long-lasting.

I chose to run a single survey with one customer segment to validate the approach before scaling it. We kept the survey tight and centered on satisfaction, customer context, and usage patterns. Stakeholders suggested adding more questions, but I pushed to keep it lightweight so we could maximize completion and clarity.

SOLUTION

I built a simple satisfaction survey that captured how customers felt about the product and how they used it. It was easy to distribute using free tools and straightforward to complete. We sent it to our existing customer base and synthesized the results into a clear, actionable report.

First lightweight survey using Google Forms

Over time, this evolved into:

Larger, more formal annual satisfaction surveys for our two major audiences

Automated surveys triggered at different ownership milestones

A recurring insights report that informed product, marketing, and sales decisions

Example of the automated survey email sent to customers at different ownership lengths.

These surveys became a consistent pulse check on our customer and user base and gave us concrete success metrics to track year over year. While surveys aren’t a complete picture on their own, they gave us a reliable starting point and helped us identify where deeper investigation was needed.

We kept the structure consistent so results could be compared year over year. The goal wasn’t to treat the data as gospel, but to build a durable feedback loop that made the company smarter over time.

RESULTS

Established year-over-year satisfaction benchmarks

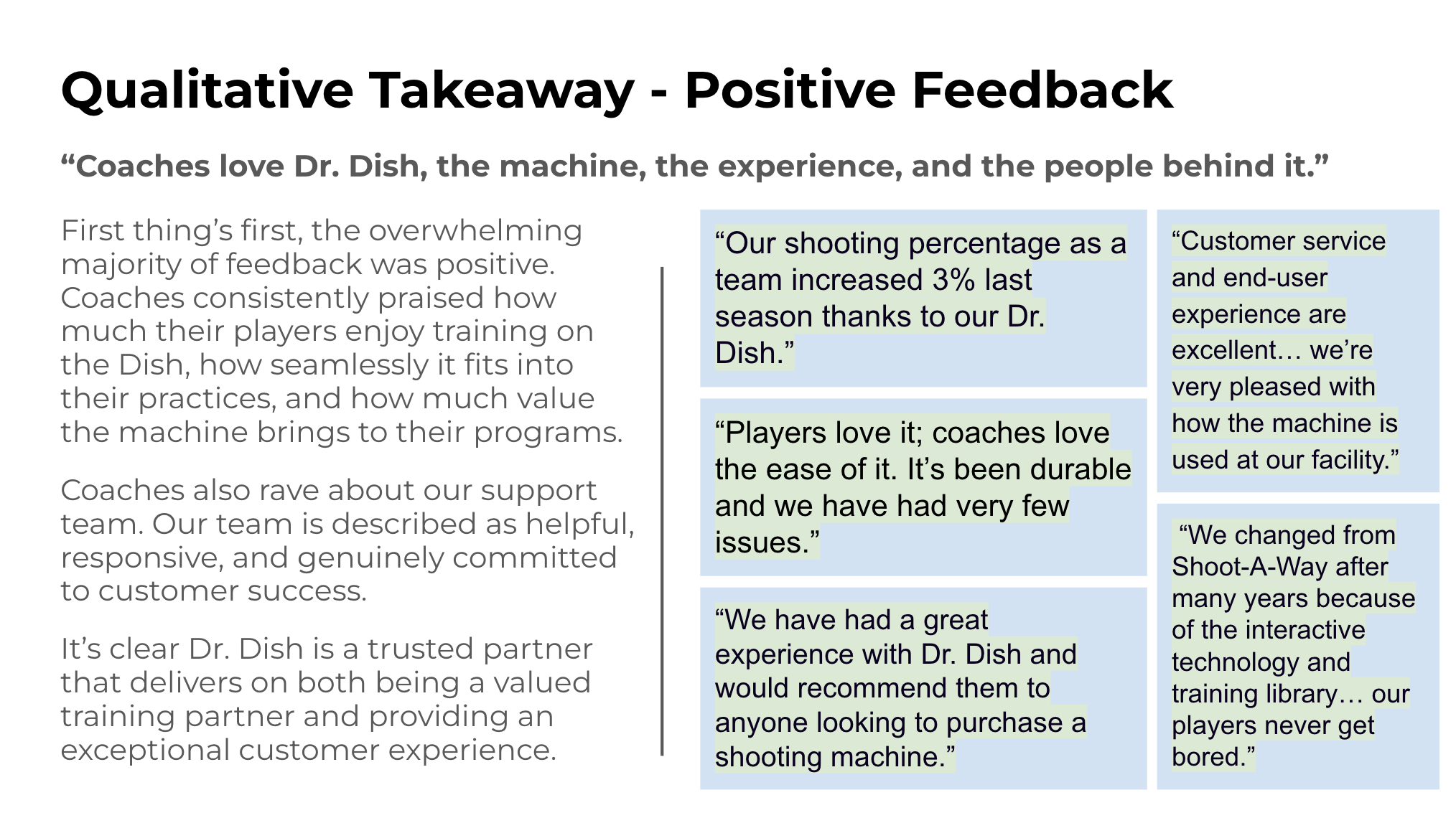

Collected qualitative feedback that directly shaped roadmap decisions

Launched multiple company-wide initiatives based on survey insights

Informed product strategy, marketing campaigns, and sales messaging

For the business, this created a shared source of truth about customer sentiment. For customers, it created a clearer feedback loop and a stronger sense that their experience actually mattered.

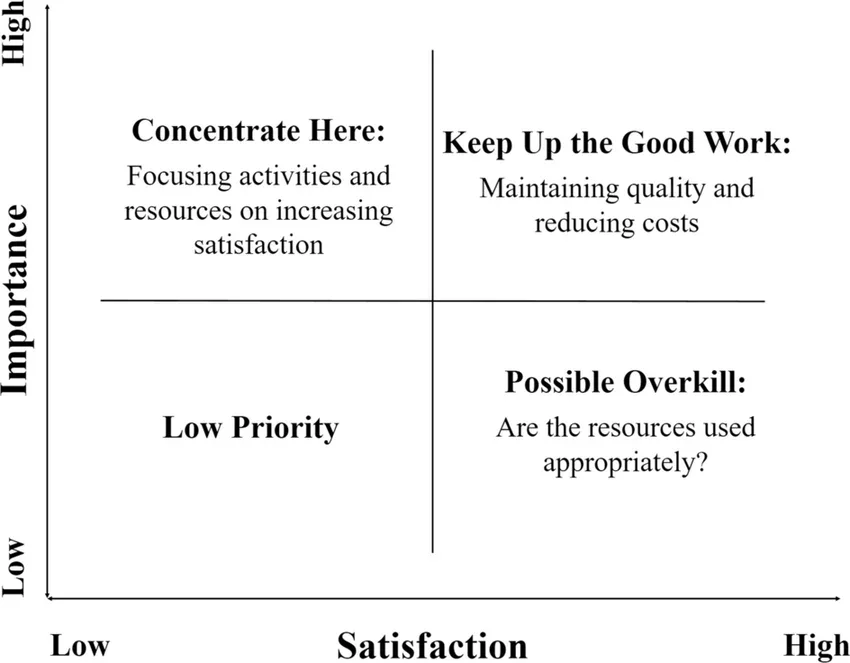

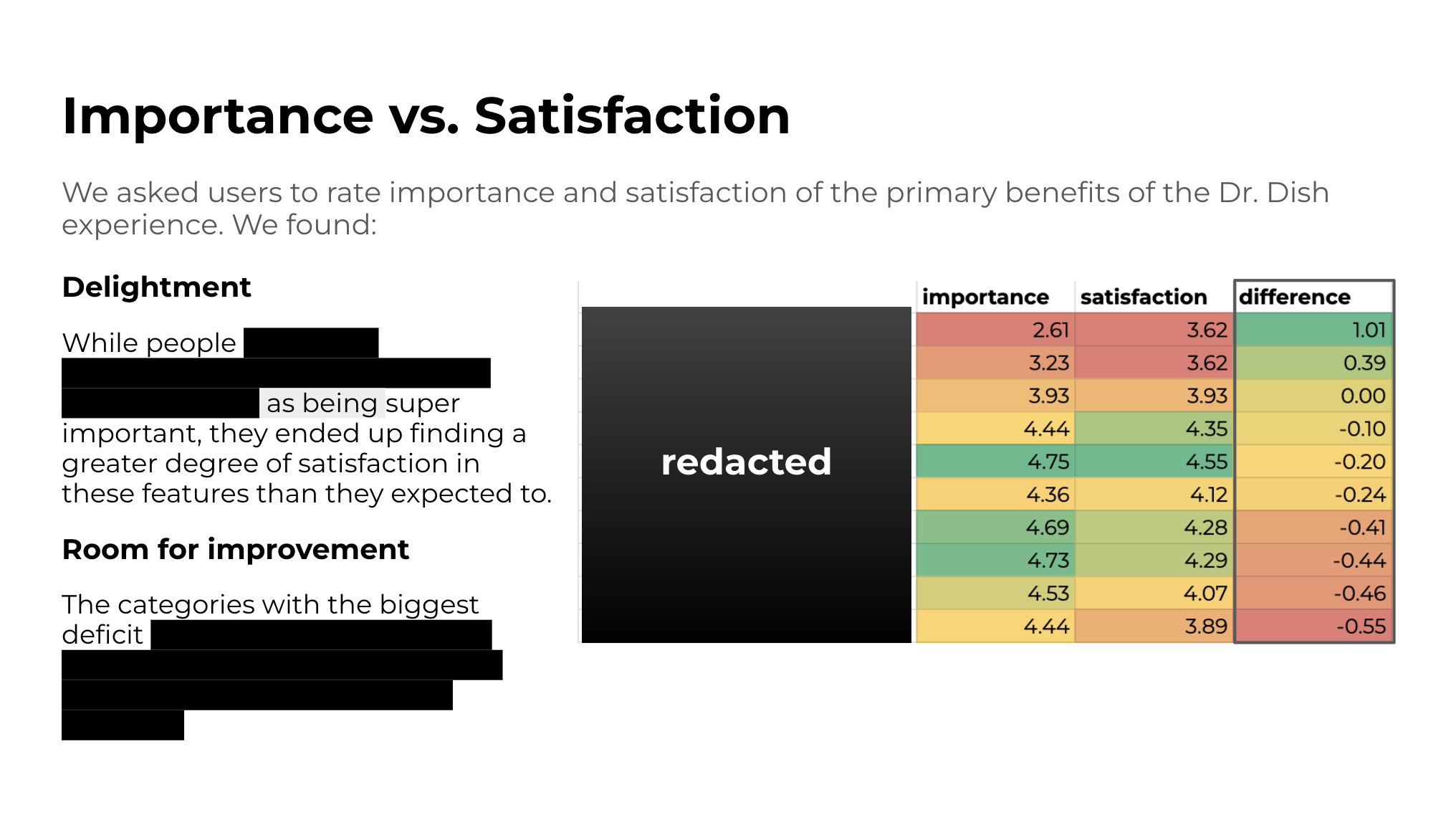

*Redacted to protect company data.* Here you can see which features were most important to users and which provided the greatest satisfaction. Features that were not particularly important but still satisfied customers we considered delighters. Features that were important but did not satisfy customers were areas for improvement.
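The importance-versus-satisfaction logic described above can be sketched in a few lines of Python. This is a minimal illustration, not the actual analysis: the 1-5 rating scale, the 3.0 cutoff, the feature names and scores, and the "strength" and "low priority" labels are all assumptions. Only "delighter" and "area for improvement" come from the case study.

```python
from dataclasses import dataclass

# Hypothetical cutoff: the midpoint of an assumed 1-5 rating scale.
THRESHOLD = 3.0


@dataclass
class FeatureScore:
    name: str
    importance: float    # mean importance rating (assumed 1-5 scale)
    satisfaction: float  # mean satisfaction rating (assumed 1-5 scale)


def classify(feature: FeatureScore, threshold: float = THRESHOLD) -> str:
    """Place a feature in an importance/satisfaction quadrant."""
    important = feature.importance >= threshold
    satisfying = feature.satisfaction >= threshold
    if satisfying and not important:
        return "delighter"              # named in the case study
    if important and not satisfying:
        return "area for improvement"   # named in the case study
    if important and satisfying:
        return "strength"               # label assumed for this sketch
    return "low priority"               # label assumed for this sketch


# Made-up feature names and scores, for illustration only.
features = [
    FeatureScore("Feature A", importance=2.1, satisfaction=4.4),
    FeatureScore("Feature B", importance=4.6, satisfaction=2.3),
    FeatureScore("Feature C", importance=4.2, satisfaction=4.5),
]

labels = {f.name: classify(f) for f in features}
for name, label in labels.items():
    print(f"{name}: {label}")
```

In practice the cutoff would more likely be set relative to the survey's own averages than fixed at a scale midpoint, so treat the threshold as a placeholder.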

MY ROLE

I owned this program end-to-end, including:

Designing the survey

Getting leadership buy-in

Recruiting participants and managing communication

Organizing and analyzing the data

Synthesizing insights into a clear report

Distributing findings and tracking qualitative feedback

I turned this into a recurring, longitudinal program and added touchpoints across the ownership lifecycle. I worked closely with product, sales, and marketing to make sure we were targeting the right audiences, asking the right questions, and turning insights into action.

Slide from the 45-page report showing how qualitative feedback is presented

WHY I’M SHOWING THIS WORK

This case study shows how I build durable insights systems, not just one-off studies. I start small, prove value, and scale what works. It reflects how I think about signal vs noise, long-term measurement, and using research to shape real decisions, not just presentations.

It highlights that I bring:

Longitudinal, systems-level thinking

Strong synthesis and exec-facing communication

A focus on building infrastructure, not just running studies

Details have been intentionally generalized or modified to respect confidentiality and intellectual property agreements. This case study focuses on my role, process, and decision-making rather than proprietary implementation details.