Observing users in their environment to create features that stick

Problem: We didn’t understand what practice looked like for players using our product at home, making it difficult to prioritize the roadmap or market the product effectively.

What I focused on: Getting direct visibility into real at-home usage through contextual field research, then translating those behaviors into clear product opportunities.

What changed: Replaced assumptions with real user behavior, leading to two high-impact features that became core to the home experience and key drivers of engagement and messaging.

My role: Led the work end-to-end, from designing and conducting in-home research to defining, validating, and launching features in partnership with design, engineering, sales, and marketing.

PROBLEM

As we tried to plan our roadmap more strategically, we realized we had a major blind spot: we didn’t actually know what it looked like when players used our product at home. That made it hard to decide what to build and to market the product clearly to families considering a purchase.

We were guessing about almost everything. What does a practice session look like? Are parents or siblings involved? Can players set up the machine themselves? Are they following routines or improvising? What do their environments look like? Without answers to these questions, we risked building features that didn’t matter and crafting marketing messages that didn’t resonate.

If we didn’t explore this, we would keep spending precious R&D time and money on ideas that sounded good internally but didn’t move the needle for real users.

We had conducted many interviews with players and parents, but it was difficult to truly understand their setup and the context in which they practiced without seeing it firsthand.

DISCOVER

To choose the right direction, we first needed to understand real behavior end-to-end. The flow could break at any point, from getting the machine out of storage and setting it up to running a session and packing it away, and we wouldn’t know why. We also needed to understand usage patterns: short vs long sessions, solo vs group practice, driveway vs court setups, power constraints, and how much structure players already had.

I set up and ran contextual inquiries in multiple homes. I traveled to users' homes and asked families to set up the machine and practice as they normally would while I observed and asked questions. The sessions focused on these themes:

Transportation and setup friction

Session length and intent

Player-driven training routines vs parent, coach, or trainer-driven training routines

Parental influence on motivation and training frequency

Goal setting

Competition and usage among family members and teammates

What surprised me most was how self-directed these players were. I had assumed younger players (10–14) would struggle to practice on their own. In reality, if a family had invested in a shooting machine, the player was usually already highly motivated, capable of setting up the machine, and serious about improving.

One participant setting up the machine in their backyard.

DEFINE

We needed to decide what features would best support what players were already doing at home. There were many possible directions, and many different environments and use cases, so narrowing the list was the real challenge.

I recorded, transcribed, and tagged all sessions, then synthesized the findings into a clear set of opportunity areas. We chose to focus on two features that would help players do more of what they were already doing, just more easily and more consistently.

We validated both concepts with usability testing, cut functionality that wasn’t pulling its weight, and made key flows more flexible to support different home setups. We also chose to focus on software first, since it was faster and lower risk, while capturing hardware insights for future form-factor changes.

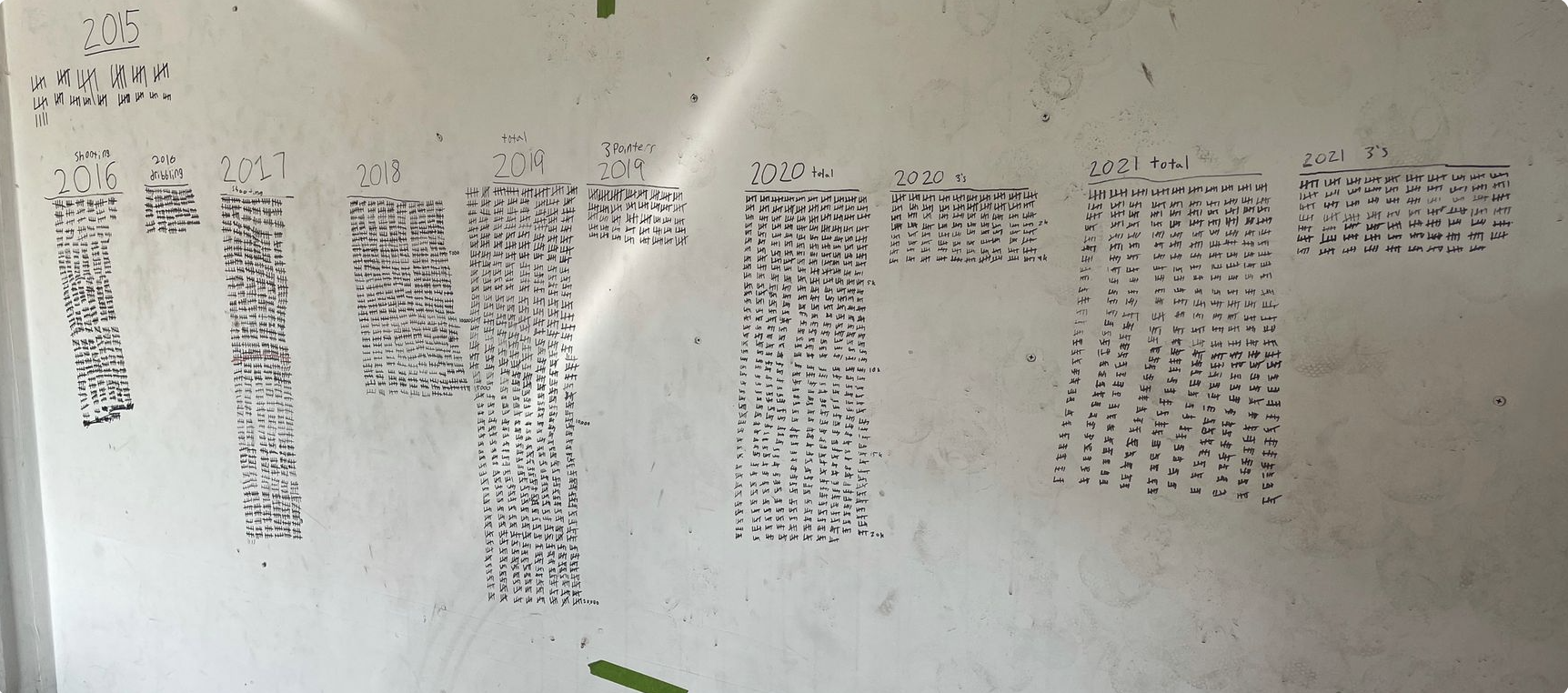

How one young player tracked all their made shots over the years by tallying on their garage wall.

SOLUTION

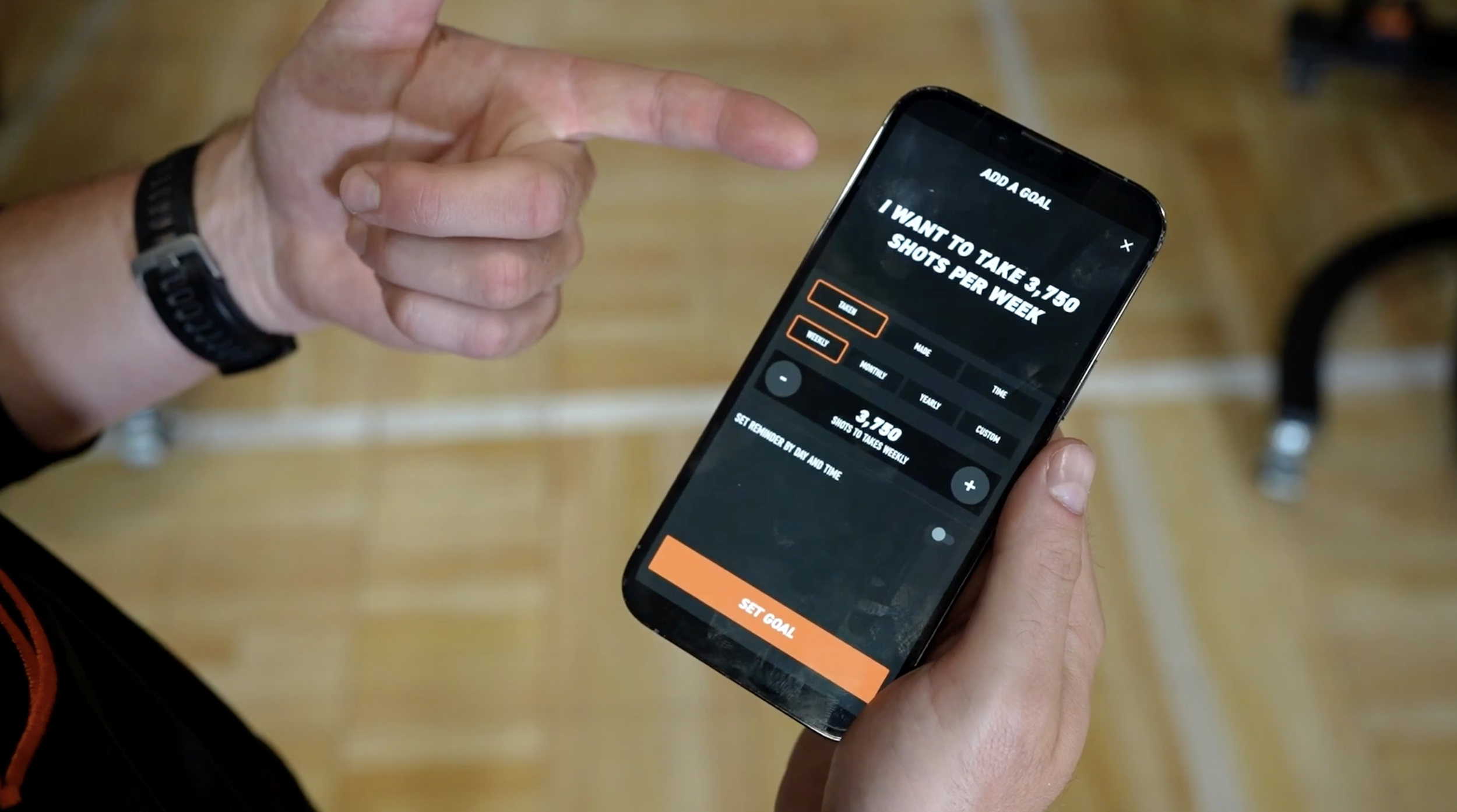

Goal Setting

Players could enter shooting goals in the app and have all shots, makes, and time practiced automatically logged toward short- and long-term goals. Many players were already tracking this on paper or on their walls. We made it effortless and added motivational reminders to help them stay consistent.

Multiplayer Mode

We added a way for multiple people to practice together and track scores separately. This created built-in competition among family members and teammates and made sessions more engaging.

With a small dev team, we kept both features tightly scoped. We started simple, then layered in additions like notifications, guest shooters, and winners after seeing strong adoption. The goal was to get these features into the wild quickly, learn from real usage, and invest further only once they proved their value.

RESULTS

These two features became cornerstones of the home product experience

Multiplayer sessions consistently represented a meaningful slice of overall usage

Thousands of players set goals in the app

Both features became central to sales talk-tracks and marketing messaging

For users, this made the product feel like a true training partner, not just a machine. Families could see progress, maximize usage, and make training more fun and motivating. For the business, these features became clear differentiators, strengthening the product’s value.

MY ROLE

I owned this work end-to-end, including:

Recruiting participants and scheduling sessions

Running and recording field studies

Tagging, synthesizing, and presenting insights

Defining feature direction and prioritization

Writing product requirements

Partnering with design on solutions

Conducting usability testing and iteration

Writing notification copy and logic

Testing with engineering before launch

Creating GTM briefs for Sales and Marketing

WHY I’M SHOWING THIS WORK

This case study shows how I use real-world behavior, not assumptions, to drive product decisions. I start with messy, contextual research, synthesize it into clear opportunities, and turn that into features with measurable impact.

It highlights that I:

Lead with user needs, not internal guesses

Turn qualitative research into product strategy

Make focused, high-impact bets with small teams

Balance discovery, delivery, and adoption

Partner across product, design, engineering, sales, and marketing

Details have been intentionally generalized or modified to respect confidentiality and intellectual property agreements. This case study focuses on my role, process, and decision-making rather than proprietary implementation details.